If you want to see where business AI is heading, don't look at ChatGPT. Look at OpenClaw.

ChatGPT is what you already know: a chat window that answers very well. OpenClaw is something else: an assistant that lives on your computer, speaks through the channels you already use (WhatsApp, Telegram, Slack), has real tools to act on your system, can wake up on its own to do scheduled work, and can have its own identity to act "on behalf of" a person inside a company. The difference is not cosmetic. It is categorical.

This article is about that. What OpenClaw is, where it actually stands, where it makes sense today and where it doesn't, and why it's worth understanding even if you don't end up installing it.

My take, no fluff

Today, at Nexe Labs, I would not recommend OpenClaw as a standard autonomous-agent layer for an SME. The system is powerful, but it is still changing too fast, exposes too much configuration surface, and carries too much risk of tying a commercial promise to a project that is still consolidating.

That said, ignoring it would be a mistake. OpenClaw is the best available window into how the enterprise agents we will be using a year or two from now are going to work. Those who learn now to operate cautiously in this space will be better prepared when the category matures.

What OpenClaw actually is

OpenClaw is an open-source personal AI agent, MIT-licensed, that runs on your own devices. The creator is Peter Steinberger, the Austrian developer who founded PSPDFKit. The project started in November 2025 (originally called Clawdbot, in reference to Anthropic's Claude; after a trademark complaint it was renamed to Moltbot and finally to OpenClaw). The official repository has over 372,000 stars on GitHub, an outsized figure for a project only a few months old.

Three pieces make it different from a traditional chatbot:

- A local Gateway that runs on your machine and acts as a switchboard: it receives messages from the connected channels, passes them to the model, and orchestrates tool execution.

- Real tools: controlled browser, file read and write, command execution, calendar and email access, customisable skills.

- Scheduled autonomy: standing orders and cron-style tasks. The agent can wake up at a given time, do its work, deliver the result to a channel and go back to sleep.

On top of that, the project proposes a delegate architecture: the agent can have its own identity (email, visible name, calendar) and act on behalf of a person with explicit permissions, without impersonating them. The documentation describes it in three levels:

- Read and draft (the agent prepares, the human sends).

- Send on behalf of (the agent acts under its own identity but with delegated authority).

- Scheduled proactivity (the agent acts on its own initiative following standing orders).

This framework is useful beyond OpenClaw, because it is exactly the pattern the whole category of enterprise agents is moving towards.
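
The three levels can be read as an ordered permission scale. The following is a minimal sketch of that reading, not OpenClaw's actual API: the names `DelegationLevel`, `REQUIRED_LEVEL` and `is_allowed`, the action names, and the assumption that each level includes the ones below it are all hypothetical and chosen for illustration.

```python
from enum import IntEnum


class DelegationLevel(IntEnum):
    """Hypothetical names for the three levels described above."""
    READ_AND_DRAFT = 1       # the agent prepares, the human sends
    SEND_ON_BEHALF = 2       # the agent sends under its own identity
    SCHEDULED_PROACTIVE = 3  # the agent acts on standing orders


# Assumed mapping: the minimum level each illustrative action requires.
REQUIRED_LEVEL = {
    "read_inbox": DelegationLevel.READ_AND_DRAFT,
    "draft_reply": DelegationLevel.READ_AND_DRAFT,
    "send_email": DelegationLevel.SEND_ON_BEHALF,
    "run_standing_order": DelegationLevel.SCHEDULED_PROACTIVE,
}


def is_allowed(action: str, granted: DelegationLevel) -> bool:
    """Return True only if the action is known and within the granted level."""
    required = REQUIRED_LEVEL.get(action)
    if required is None:
        return False  # unknown actions are denied by default
    return granted >= required


print(is_allowed("draft_reply", DelegationLevel.READ_AND_DRAFT))  # True
print(is_allowed("send_email", DelegationLevel.READ_AND_DRAFT))   # False
```

Denying unknown actions by default is the design choice that matters here: a new capability has to be assigned a level explicitly before the agent can use it.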

Where it stands today

In May 2026, OpenClaw is a live project with a very high change cadence: there are stable, beta and dev versions, with prereleases shipping every few days. That is good for innovation and bad for operational predictability.

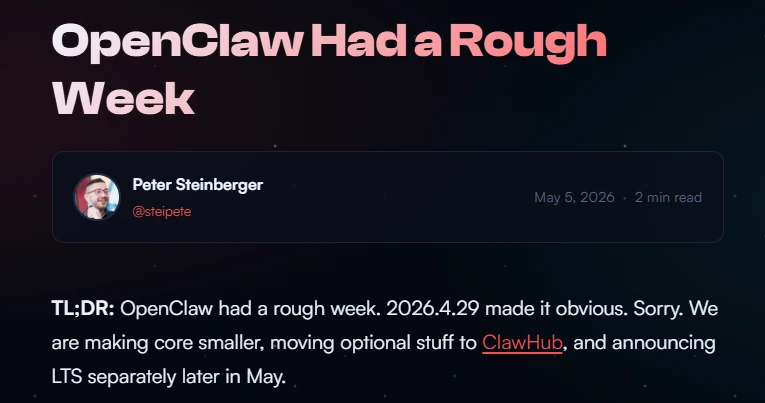

Steinberger himself has publicly acknowledged that the project has gone through tough weeks, with stability issues, slow gateways and installations that get stuck in loops. The team's response is heading in the right direction: a smaller core, optional features outside the core, and a long-term support (LTS) track separate from the fast-moving changes. But it isn't there yet.

On 14 February 2026, Steinberger joined OpenAI, and the project moved to an independent foundation with technical and financial backing from OpenAI. The parallel being used is Chromium/Chrome: open-source and community-driven underneath, commercial products on top. The business reading of this move is that OpenAI has concluded that this is the shape of the personal agent that will reach everyone, and has chosen to back the project rather than compete from scratch.

What it's useful for today

OpenClaw works well when four conditions are met at once: repetitive work, clear channels, a bounded technical perimeter and limited consequences if something fails.

Cases where it fits:

- Internal email triage: the agent reads the inbox, classifies by priority and prepares draft replies.

- Meeting and thread summaries: especially useful for teams who live in Slack/Discord/Telegram and need an operational summary at end of day.

- Daily briefs: agenda, reminders, pending items, internal alerts.

- Monitoring: reviewing logs, internal systems, metrics and flagging anything out of the ordinary.

- Information gathering: basic prospecting, controlled scraping, first-pass lead qualification.

Cases where I would not deploy it today:

- Payments, approvals, operations with financial impact.

- HR operations with sensitive data.

- Public customer-facing service with the ability to execute outward actions.

- Any process where traceability, segregation of duties and determinism are hard requirements.

Practical rule: if a regular person would feel uncomfortable about an agent making a given decision without supervision, it is still too early to put OpenClaw on it.

The real risks

1. Prompt injection. The best-known risk, and still without a complete solution. An agent with tools can be manipulated by text injected into emails, links, files or messages. The more autonomy, the wider the blast radius. OpenClaw's own documentation insists on designing under the assumption that the model can be manipulated. OWASP still lists it as the top risk in LLM-based applications.

2. Overprivileged configuration. By default, the main session can have broad access to the system. Exposing the Gateway to the internet is dangerous. Sharing it across multiple low-trust operators is worse. The documentation explicitly recommends one user per machine and one Gateway per trust boundary. OpenClaw is a bad idea as an improvised multi-tenant service.

3. Skills and supply chain. Third-party skills must be treated as untrusted code. The project has worked on this piece (automatic scanning, default blocking on serious findings), but an apparently clean skill can push the agent toward unsafe behaviour purely through language.

4. The agent says it's done… and it isn't. The least-discussed risk and perhaps the most uncomfortable. An agent can report that a task is complete when the actual state of the system says otherwise. This does not invalidate the category. It does explain why selling "full autonomy" today is irresponsible.

5. Real vulnerabilities. Through 2026, advisories and CVEs have been published for the project. That is the predictable price of combining a large attack surface, a fast pace of change, autonomy and popularity. You have to stay up to date and monitor the official channels before any deployment.
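
Risk 4 has a simple mitigation pattern: never accept the agent's own report as proof, and check the real state of the system independently. The sketch below illustrates that idea in general terms. `TaskReport` and `verified_done` are hypothetical names, and the file check stands in for whatever state the task was supposed to change; none of this is taken from OpenClaw.

```python
import tempfile
from dataclasses import dataclass
from pathlib import Path
from typing import Callable


@dataclass
class TaskReport:
    task: str
    claimed_done: bool  # what the agent says about its own work


def verified_done(report: TaskReport, check: Callable[[], bool]) -> bool:
    """Accept a 'done' claim only if an independent check of real state agrees."""
    return report.claimed_done and check()


with tempfile.TemporaryDirectory() as tmp:
    target = Path(tmp) / "daily-brief.txt"
    report = TaskReport(task="write daily brief", claimed_done=True)

    # The agent claims success, but the file was never written.
    print(verified_done(report, target.exists))  # False

    # Once the expected artefact really exists, the claim is accepted.
    target.write_text("agenda: ...")
    print(verified_done(report, target.exists))  # True
```

The check should be something the agent cannot influence through its own report: a file on disk, a row in a database, a message ID returned by the mail server.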

What to check specifically in Andorra

If OpenClaw touches personal data (clients, employees, calendars, mailboxes, documents), it is no longer "trying an AI". It is data processing.

The APDA, Andorra's data protection agency, points out that the LQPD, the country's data protection law, has been in force since 2022 and sets specific obligations for data controllers, including the appointment of a Data Protection Officer when there is sensitive data or processing that puts people's rights at risk. In parallel, Andorra Digital places data governance and security at the centre of the national strategy.

For an Andorran company, this translates into a short list of must-haves before considering any autonomous agent:

- Know which data the agent touches and whether it falls into the sensitive category.

- Have an up-to-date record of processing activities.

- Identify whether the processing requires an impact assessment (DPIA).

- Confirm whether a designated DPO is needed.

- Document the legal basis of each processing activity.

This is not decorative paperwork. It is what separates a prudent technical pilot from a sanctionable problem.

When it would make sense, with caution

If a client asks me today for a pilot with OpenClaw, the conversation goes like this:

- A separate system for each company. Not shared with any other client.

- Only the people and channels we approve in advance can come in. Nothing open to the world.

- The agent has its own name and email. It does not pretend to be any real employee.

- The agent only has the minimum tools to do its specific job. If it doesn't need to browse the internet, it doesn't browse. If it doesn't need to send emails, it doesn't send them.

- Any external functionality added is reviewed by us before installation. We don't pull things in and use them directly.

- Every action with consequences is logged and may require human approval before execution.

- We start small, measure whether it brings real value, and only then consider scaling up. No rush.

None of this is over-engineering. It is the reasonable minimum for a system that can do a lot of work, but also a lot of damage if left to roam freely.
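
Several of these conditions (approved senders only, minimum tools, human approval, logging) can be expressed as one small gate in front of every tool call. What follows is a sketch under stated assumptions, not OpenClaw configuration: `PilotPolicy`, its fields, the tool names and the example addresses are all hypothetical.

```python
from dataclasses import dataclass, field


@dataclass
class PilotPolicy:
    allowed_senders: set[str]  # people approved in advance
    allowed_tools: set[str]    # minimum tools for this specific job
    needs_approval: set[str]   # tools whose use has consequences
    audit_log: list[dict] = field(default_factory=list)

    def decide(self, sender: str, tool: str, approved: bool = False) -> str:
        """Return the outcome for one requested tool call and log it."""
        if sender not in self.allowed_senders:
            outcome = "deny: unknown sender"
        elif tool not in self.allowed_tools:
            outcome = "deny: tool not granted"
        elif tool in self.needs_approval and not approved:
            outcome = "hold: needs human approval"
        else:
            outcome = "allow"
        # Every decision is recorded, including denials.
        self.audit_log.append({"sender": sender, "tool": tool, "outcome": outcome})
        return outcome


policy = PilotPolicy(
    allowed_senders={"ops@example.com"},
    allowed_tools={"read_inbox", "send_email"},
    needs_approval={"send_email"},
)
print(policy.decide("ops@example.com", "read_inbox"))       # allow
print(policy.decide("ops@example.com", "send_email"))       # hold: needs human approval
print(policy.decide("ops@example.com", "browse_web"))       # deny: tool not granted
print(policy.decide("stranger@example.org", "read_inbox"))  # deny: unknown sender
```

The gate sits outside the model on purpose. A prompt injection can change what the agent asks for, but it cannot change what the policy allows.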

The pattern matters more than the product

OpenClaw probably won't be the tool your SME is running three years from now. But the patterns it demonstrates (the agent's own identity, scheduled autonomy with guardrails, real tools, delegation with explicit permissions) will be in every tool that comes after.

That's why it's worth understanding even if you don't deploy it. The people who grasp these pieces in 2026 will be three steps ahead in 2028.

At Nexe Labs, meanwhile, we don't sell autonomy for its own sake. We propose it when the perimeter is controllable enough that a spectacular demo doesn't turn into a security problem.